Unlike traditional statistical models built for narrow tasks, these models generalize across many biomolecular tasks, for example protein structure prediction, DNA edit design, molecular docking, and even cellular phenotype prediction. By encoding biological complexity into rich, learned representations, they can predict interactions, generate novel molecules, and guide experiments, even in data-scarce or previously intractable domains. This unlocks new capabilities in therapeutic design, functional genomics, and biomolecular engineering, shifting science from slow, brute-force workflows to fast, feedback-driven design loops. In short, AI can now learn enough biology and chemistry to help design what comes next.

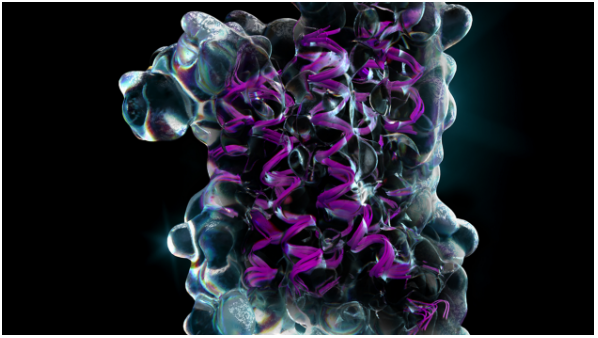

Protein foundation models, large and largely transformer-based networks such as AlphaFold 3, ESM3, Proteína, and Pallatom, are collapsing separate pipelines for fold prediction, mutational scanning, docking, and de novo design into fewer, promptable engines. Driven by scale (massive datasets and parameter counts), multimodality (joint sequence-structure-ligand embeddings), and controllability (prompting or quick fine-tuning), they could turn weeks of lab work or custom code into minutes of inference, reshaping protein R&D into a software-first workflow.
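Mutational scanning shows how one model can replace a bespoke pipeline: a protein language model can score every single-residue substitution by comparing the log-probability it assigns to the mutant and the wild-type residue at each position (a log-likelihood ratio, the usual zero-shot variant score). The minimal sketch below assumes a toy per-position profile, built from a hypothetical four-sequence alignment, as a stand-in for real model probabilities; `profile_from_alignment` and `scan_mutations` are illustrative names, not part of any library.

```python
import math

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def profile_from_alignment(seqs, pseudocount=1.0):
    """Per-position amino-acid probabilities from equal-length aligned sequences.

    Toy stand-in (assumption) for a protein model's per-position output distribution.
    """
    profile = []
    for i in range(len(seqs[0])):
        counts = {aa: pseudocount for aa in AMINO_ACIDS}
        for s in seqs:
            if s[i] in counts:
                counts[s[i]] += 1
        total = sum(counts.values())
        profile.append({aa: c / total for aa, c in counts.items()})
    return profile


def scan_mutations(wild_type, profile):
    """Score every single-point mutant as log p(mutant) - log p(wild type) at that position."""
    scores = {}
    for i, wt in enumerate(wild_type):
        for aa in AMINO_ACIDS:
            if aa == wt:
                continue
            # Mutation names use the common "K2R" style: wild type, 1-based position, mutant.
            scores[f"{wt}{i + 1}{aa}"] = math.log(profile[i][aa]) - math.log(profile[i][wt])
    return scores


alignment = ["MKTAY", "MKSAY", "MRTAY", "MKTGY"]  # hypothetical toy alignment
scores = scan_mutations("MKTAY", profile_from_alignment(alignment))
worst = sorted(scores, key=scores.get)[:3]  # most disfavored substitutions
print(len(scores), worst)
```

Negative scores flag substitutions the profile considers less likely than the wild type; swapping the toy profile for a model's per-position output distribution leaves the scanning loop unchanged.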

Next, these models are expected to move beyond folding to full-scale design: multi-chain complexes, metabolic pathways, and even adaptive biomaterials on demand. Three currents are likely to drive that future: continued scaling toward trillion-token training sets that capture rare folds; deeper cross-modal fusion that ties together cryo-EM maps, single-cell readouts, and reaction kinetics; and plug-and-play adapters (“action layers”) that translate a model’s output coordinates directly into DNA constructs or cell-free expression recipes. Realizing this vision will require shared, high-quality structural and functional datasets, open benchmarking suites for generative accuracy and safety, and compute-efficient methods so that labs and startups, not just hyperscalers, can iterate at foundation-model speed.
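The simplest step of such an action layer can be sketched: once a model has produced a protein sequence for a design, an adapter has to emit a DNA construct that encodes it. The example below assumes the designed sequence is already known (it is hypothetical), uses one fixed codon per amino acid as a stand-in for real codon optimization, and checks the result by translating it back with the standard genetic code. `protein_to_construct` and `CHOSEN_CODON` are illustrative, not an existing tool.

```python
# Standard genetic code in compact form: bases ordered T, C, A, G; "*" marks stop codons.
BASES = "TCAG"
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    a + b + c: AMINO[16 * i + 4 * j + k]
    for i, a in enumerate(BASES)
    for j, b in enumerate(BASES)
    for k, c in enumerate(BASES)
}

# Hypothetical choice: one fixed codon per residue (no host-specific codon optimization).
CHOSEN_CODON = {
    "A": "GCG", "R": "CGT", "N": "AAC", "D": "GAT", "C": "TGC",
    "Q": "CAG", "E": "GAA", "G": "GGC", "H": "CAT", "I": "ATT",
    "L": "CTG", "K": "AAA", "M": "ATG", "F": "TTT", "P": "CCG",
    "S": "AGC", "T": "ACC", "W": "TGG", "Y": "TAT", "V": "GTG",
}


def protein_to_construct(protein, stop="TAA"):
    """Back-translate a designed protein into a coding sequence ending in a stop codon."""
    return "".join(CHOSEN_CODON[aa] for aa in protein) + stop


def translate(dna):
    """Translate a coding sequence codon by codon until the first stop codon."""
    protein = []
    for pos in range(0, len(dna) - 2, 3):
        aa = CODON_TABLE[dna[pos:pos + 3]]
        if aa == "*":
            break
        protein.append(aa)
    return "".join(protein)


designed = "MKTAYIAKQR"  # hypothetical model output
construct = protein_to_construct(designed)
assert translate(construct) == designed  # round-trip check before ordering DNA
print(construct)
```

A practical adapter would also need regulatory parts (such as a promoter and ribosome-binding site) and host-specific codon usage; the round-trip check is the piece that carries over unchanged.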

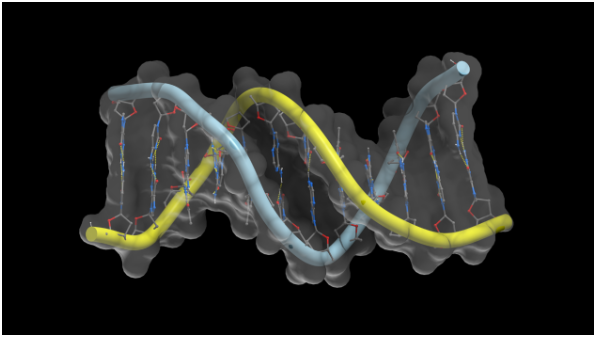

These models are already topping benchmarks for variant effect prediction and single-cell annotation, but they still cover only a slice of genome biology. Their recipe for progress so far is simple but powerful: massive scale (billions of DNA tokens and transformer parameters), self-supervised transfer (pretraining on unlabeled omics data, then light fine-tuning), and, for some models, multimodality (combining sequence, chromatin, and single-cell readouts in one model). As open datasets grow and GPU-efficient training improves, expect these “genomic foundation models” to become a standard layer in life-science tech stacks.
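Self-supervised transfer works because the training signal comes from the sequence itself: hide part of it and train the model to recover what was hidden. The data side of that objective can be sketched as below, assuming non-overlapping 6-mer tokens and BERT's 15% masking rate; both are illustrative choices (BERT's 80/10/10 replacement rule is omitted, and several genomic models, such as HyenaDNA and Evo 2, instead predict the next nucleotide). The function names are hypothetical.

```python
import random


def kmer_tokenize(seq, k=6):
    """Split a DNA sequence into non-overlapping k-mers, dropping any trailing remainder."""
    return [seq[i:i + k] for i in range(0, len(seq) - k + 1, k)]


def mask_tokens(tokens, mask_rate=0.15, mask_token="[MASK]", seed=0):
    """Hide a random subset of tokens; the model is trained to recover the originals."""
    rng = random.Random(seed)
    n_mask = max(1, round(mask_rate * len(tokens)))
    positions = sorted(rng.sample(range(len(tokens)), n_mask))
    inputs = list(tokens)
    labels = [None] * len(tokens)  # None means the loss ignores this position
    for p in positions:
        labels[p] = tokens[p]
        inputs[p] = mask_token
    return inputs, labels


# Hypothetical unlabeled sequence; no annotation is needed for pretraining.
dna = (
    "ATGCGTACGT" "TAGCCTAGGC" "TAACGTTAGC"
    "ATCGATCGGA" "TCCAAGTT"
)
tokens = kmer_tokenize(dna)
inputs, labels = mask_tokens(tokens)
print(inputs)
```

Fine-tuning then reuses the pretrained network with a small task-specific head and a modest labeled set, which is the “light fine-tuning” half of the recipe.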

Next comes the era of genome-scale AI co-pilots. Studies such as Geneformer and Evo 2 suggest that large sequence models can not only predict biological function but also help design useful CRISPR edits, de novo promoters, and regulatory circuits entirely in silico. Emerging architectures such as HyenaDNA, GenSLM, and Longformer-DNA can extend context windows beyond 1 Mbp, capturing long-range gene regulation, including effects of 3D chromatin loops. Eventually, multi-omic models could layer methylation, ATAC-seq, and spatial transcriptomics data onto sequence embeddings for richer biological insight. These advances could power real-time clinical variant triage, high-throughput enhancer discovery, and, one day, new therapeutic approaches such as programmable cell therapies, all from a single “genomic foundation model” API. Delivering that future demands open, privacy-safe genome datasets, standardized zero-shot benchmarks, and next-generation compute infrastructure and software that make trillion-token pretraining affordable outside hyperscale labs.
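Why megabase-scale context calls for new architectures is mostly arithmetic: at single-nucleotide resolution, 1 Mbp is a million tokens, and dense self-attention scores every pair of tokens, so cost grows with the square of the context length. The sketch below assumes 16-bit scores and a naively materialized score matrix (memory-efficient kernels such as FlashAttention avoid storing it, but compute still scales quadratically); `attention_cost` is a hypothetical helper. Subquadratic operators, such as the long convolutions in HyenaDNA, are designed to sidestep this.

```python
def attention_cost(context_bp, bp_per_token=1, bytes_per_score=2):
    """Tokens, pairwise attention scores, and bytes for one dense n-by-n score matrix."""
    n_tokens = context_bp // bp_per_token
    n_scores = n_tokens ** 2  # dense self-attention compares every token pair
    return n_tokens, n_scores, n_scores * bytes_per_score


for bp_per_token in (1, 6):  # single nucleotides vs. hypothetical 6-mer tokens
    n, scores, mem = attention_cost(1_000_000, bp_per_token)
    print(f"{bp_per_token} bp/token: {n:,} tokens, {scores:.2e} scores, "
          f"{mem / 1e12:.2f} TB per head per layer")
```

At 1 bp per token this comes to 10^12 scores, about 2 TB per head per layer in 16-bit floats; coarser 6-mer tokens cut that by roughly 36×, at the cost of single-nucleotide resolution.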