Building on the recently released open models and data of the NVIDIA Nemotron 3 Series, NVIDIA has launched Nemotron Speech, Multimodal Retrieval-Augmented Generation (RAG), and Safety models.

Nemotron Speech features a suite of state-of-the-art open models, including a new ASR model that delivers real-time, low-latency speech recognition for live captioning and voice AI applications. Benchmarks from Daily and Modal show it performs 10x faster than comparable models.

The Nemotron Safety models boost the security and trustworthiness of AI applications. They now include the Llama Nemotron Content Safety model, with extended language support, and the Nemotron PII model for high-accuracy detection of sensitive data.

Bosch is leveraging Nemotron Speech to enable driver-vehicle interaction. ServiceNow trains its Apriel Series models on open datasets including Nemotron to achieve cost-effective multimodal performance.

Cadence and IBM are piloting the NVIDIA Nemotron RAG models to improve the retrieval and reasoning capabilities for complex technical documentation.

CrowdStrike, Cohesity and Fortinet are utilizing the NVIDIA Nemotron Safety models to strengthen the trustworthiness of their AI applications.

Palantir is integrating Nemotron models into its Ontology framework to develop a pioneering technical stack for enterprise AI agent integration. CodeRabbit is powering and scaling its AI code review system with Nemotron models, increasing speed and cost efficiency while maintaining high review accuracy.

NVIDIA has also released a range of open-source datasets, training resources and blueprints for developers, including the dataset and training code for the Llama Embed Nemotron 8B model, a top performer on the MMTEB leaderboard. Additionally, NVIDIA has launched an updated LLM Router that shows developers how to automatically route AI requests to the most suitable model, as well as datasets for building new Nemotron Speech ASR models.

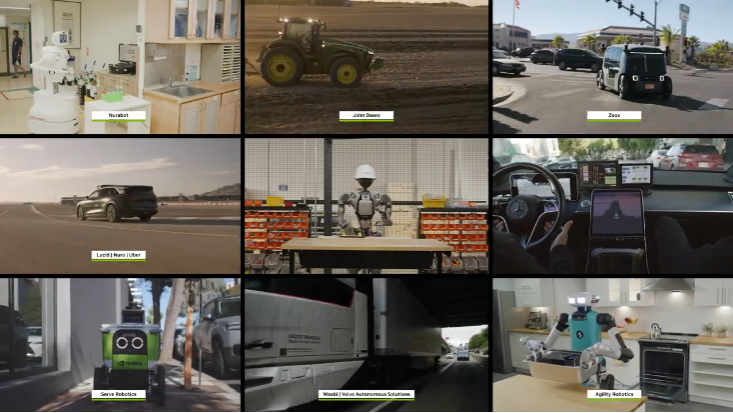

Developing physical AI for robotics and autonomous systems requires vast, diverse datasets and models capable of perceiving, reasoning and acting in complex real-world environments. On the Hugging Face platform, robotics is one of the fastest-growing fields, and NVIDIA’s open-source robotics models and datasets top the download rankings.

NVIDIA has launched the open-source NVIDIA Cosmos world foundation models, which accelerate the development and validation of physical AI through human-like reasoning and world generation capabilities.

Cosmos Reason 2 is an all-new state-of-the-art reasoning VLM that empowers robots and AI agents to achieve higher-precision visual perception, understanding and interaction in the physical world.

Cosmos Transfer 2.5 and Cosmos Predict 2.5 are two leading models that generate large-scale synthetic video across a wide range of diverse environments and conditions.

Building on the Cosmos platform, NVIDIA has also released open-source models and blueprints for all types of embodied physical AI:

Isaac GR00T N1.6 is an open-source reasoning Vision-Language-Action (VLA) model purpose-built for humanoid robots. It enables full-body control and uses NVIDIA Cosmos Reason for stronger reasoning and contextual understanding.

The NVIDIA Blueprint for video search and summarization, part of the NVIDIA Metropolis platform, serves as a reference workflow for building vision AI agents. These agents can analyze massive volumes of recorded and real-time video to improve operational efficiency and public safety.

Salesforce, Milestone, Hitachi, Uber, VAST Data and Encord are leveraging Cosmos Reason to develop AI agents for transportation and workplace productivity. Franka Robotics, Humanoid and NEURA Robotics use Isaac GR00T to simulate, train and validate new robotic behaviors prior to production deployment.